For the purposes of this article, an AI agent is a goal-oriented system that can plan multi-step work and call tools (APIs, databases, internal apps) to complete tasks. It is not just a chat interface that answers questions.

What do the best public signals say about AI agents in 2026?

- Measured platform usage is already high in large enterprises. Microsoft reports that over 80% of the Fortune 500 is deploying active agents built with Copilot Studio or Agent Builder, based on Microsoft first-party telemetry from the last 28 days of November 2025 and a definition of “active agents” as deployed to production with real activity in the past 28 days.

- Survey data shows many firms are past “curious” and into build and scale. In McKinsey’s global survey (1,993 participants across 105 nations, fielded Jun 25 to Jul 29, 2025), 23% say their organizations are scaling an agentic AI system and 39% say they are experimenting with one.

- Pilots are common; production is harder. ServiceNow and Oxford Economics report that 33% of executives say they are piloting first use cases or have at least one fully functioning agentic AI use case, and 43% say they are considering agentic AI in the next 12 months (survey of just under 4,500 executives; fielding date not stated in the public summary). Deloitte reports a gap between intent and controls: 74% plan to deploy agentic AI within two years, yet only 21% say they have a mature governance model (survey of 3,235 leaders across 24 countries, fielded Aug to Sep 2025).

- Builder surveys show many teams already run agents live. In LangChain’s survey (1,340 responses, fielded Nov 18 to Dec 2, 2025), 57.3% report agents running in production environments and 30.4% report active development with plans to deploy.

- Security and control lag behind usage. Microsoft reports 29% of employees have turned to unsanctioned AI agents for work tasks, based on a July 2025 survey of 1,700+ data security professionals commissioned by Microsoft. Microsoft’s Data Security Index reports 47% of organizations say they are applying specific security controls for generative AI (sample details are not publicly listed in the blog post).

What this article is and how to use it

This article is a reference for leaders who need quotable, defensible numbers about AI agents in companies in 2026. It compiles public surveys, analyst notes, and measured platform metrics, then explains what each metric really measures.

Think of this guide as a tool to help you with budgeting, picking your first pilot projects, mapping out security, or deciding whether to build or buy. Just keep in mind that these numbers are a great baseline, not the absolute final word on global agent adoption.

What you'll find in this article

- A curated set of the best available AI agent adoption statistics tied to 2026 planning

- How adoption breaks down by company size, region, and industry (based on the data we actually have)

- The real difference between a "pilot," a "production" deployment, and an "active agent"

- Leading indicators from developer platforms that show where the market is heading

- Tips on how to frame and present agent statistics without misrepresenting what the numbers measure

Definition: what counts as an AI agent in this article

An AI agent is a software system that can take a goal, plan multi-step work, and call tools (like APIs, databases, and internal systems) to complete tasks with limited human input. It can decide what to do next based on intermediate results, not just respond to a single prompt. Agents can run inside a product, an internal workflow, or a developer platform.

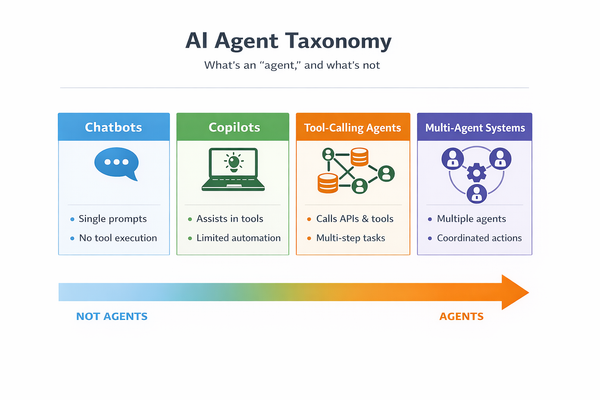

Quick taxonomy

- Chatbots: Conversational interfaces that answer questions or draft text, without reliable tool execution.

- Copilots: Assistants embedded in tools (email, IDEs, docs) that help a user complete work, often with limited automation.

- Tool-calling agents: Systems that can call tools and APIs, follow multi-step plans, and complete tasks end to end with guardrails.

- Multi-agent systems: Multiple agents with distinct roles that coordinate on a task, often via an orchestrator.

What counts as an agent here

- Agents that call tools (ticketing actions, database lookups, CRM updates, scheduling, workflow steps)

- Agents that run multi-step plans with checkpoints, retries, and human approval steps

- Agents with defined permissions and audit trails
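To make these criteria concrete, here is a minimal Python sketch of an agent that calls tools, retries failures, asks for human approval, checks permissions, and writes an audit trail. Everything in it is hypothetical and for illustration only: the `Tool` and `Agent` classes, the ticketing functions, and the fixed plan are assumptions, and a real agent would normally ask a model to choose the next step rather than follow a hard-coded list.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Tool:
    name: str
    run: Callable[[dict], str]
    needs_approval: bool = False  # e.g. actions that change records


@dataclass
class Agent:
    tools: dict[str, Tool]
    allowed: set[str]                     # permission list for this agent
    approve: Callable[[str, dict], bool]  # human approval hook
    max_retries: int = 2
    audit_log: list[dict] = field(default_factory=list)

    def call(self, tool_name: str, args: dict) -> str:
        """Run one tool call with permission check, approval, retries, and audit."""
        if tool_name not in self.allowed:
            self.audit_log.append({"tool": tool_name, "status": "denied"})
            raise PermissionError(f"{tool_name} is not permitted")
        tool = self.tools[tool_name]
        if tool.needs_approval and not self.approve(tool_name, args):
            self.audit_log.append({"tool": tool_name, "status": "rejected"})
            return "rejected by reviewer"
        # One initial attempt plus up to max_retries retries.
        for attempt in range(1, self.max_retries + 2):
            try:
                result = tool.run(args)
                self.audit_log.append(
                    {"tool": tool_name, "status": "ok", "attempt": attempt}
                )
                return result
            except RuntimeError as exc:
                self.audit_log.append(
                    {"tool": tool_name, "status": "error",
                     "attempt": attempt, "error": str(exc)}
                )
        return "failed after retries"

    def run_plan(self, plan: list[tuple[str, dict]]) -> list[str]:
        """Execute a fixed multi-step plan, stopping at the first failed step."""
        results = []
        for tool_name, args in plan:
            outcome = self.call(tool_name, args)
            results.append(outcome)
            if outcome in ("rejected by reviewer", "failed after retries"):
                break
        return results


# Hypothetical ticketing tools standing in for real API calls.
def lookup_ticket(args: dict) -> str:
    return f"ticket {args['id']}: open"


def close_ticket(args: dict) -> str:
    return f"ticket {args['id']}: closed"


agent = Agent(
    tools={
        "lookup_ticket": Tool("lookup_ticket", lookup_ticket),
        "close_ticket": Tool("close_ticket", close_ticket, needs_approval=True),
    },
    allowed={"lookup_ticket", "close_ticket"},
    approve=lambda name, args: True,  # stand-in for a real reviewer
)
results = agent.run_plan([("lookup_ticket", {"id": 7}), ("close_ticket", {"id": 7})])
```

A plain chat interface has none of these parts, which is why it falls outside the definition used here.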

What doesn't make the cut

- Plain chat interfaces with no tool calling

- Single-step “prompt in, text out” automations without state, planning, or tool use

- Marketing claims that label any chatbot as an “agent” without tool execution

The best available AI agent adoption statistics for 2026

The problem with “AI agent adoption statistics 2026” is that most public numbers measure one of three things:

- Measured usage inside one ecosystem (strong signal, narrow scope)

- Self-reported surveys (broad scope, weaker measurement)

- Intent and budgets (useful for planning, not the same as usage)

Let’s break it down.

A look at real enterprise usage (Platform data)

Microsoft Security: “Over 80% of the Fortune 500 is deploying active agents.”

- What the data says: “Over 80% of the Fortune 500”

- The scope: Fortune 500 companies using agents built with Microsoft Copilot Studio or Agent Builder

- Where: Global (Fortune 500 scope, Microsoft telemetry)

- Timeframe: Agents in use during the last 28 days of November 2025

- Behind the numbers: Microsoft describes “active agents” as agents deployed to production with real activity in the past 28 days, and states it used first-party telemetry measuring agents built with Copilot Studio or Agent Builder

- Keep in mind: This is not a universal “all agents” measure. It measures activity inside Microsoft’s ecosystem and tooling definitions

- How you can use this: Use it as a benchmark for large-enterprise penetration of production agents in one major enterprise platform. It helps answer, “Are agents still fringe in big companies?”
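If you want to apply a similar threshold to your own agent inventory, the rule can be sketched in a few lines of Python. This is an illustration under assumptions, not Microsoft's implementation: the `AgentRecord` fields, the agent names, and the exact window boundaries are hypothetical; only the "in production" and "activity in the past 28 days" conditions come from the description above.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

ACTIVITY_WINDOW_DAYS = 28  # window length taken from the definition above


@dataclass
class AgentRecord:
    name: str
    in_production: bool
    last_activity: Optional[date]  # None if the agent has never run


def is_active(agent: AgentRecord, as_of: date) -> bool:
    """True if the agent is in production and ran within the window ending at as_of."""
    if not agent.in_production or agent.last_activity is None:
        return False
    window_start = as_of - timedelta(days=ACTIVITY_WINDOW_DAYS)
    return window_start < agent.last_activity <= as_of


# Hypothetical inventory, evaluated at the end of November 2025.
inventory = [
    AgentRecord("invoice-bot", True, date(2025, 11, 20)),   # live and recently used
    AgentRecord("hr-bot", True, date(2025, 10, 1)),         # live but idle
    AgentRecord("demo-bot", False, date(2025, 11, 29)),     # used, but not in production
]
active = [a.name for a in inventory if is_active(a, date(2025, 11, 30))]
```

Only the first agent counts as active here, which shows how a "created" or "deployed" count can be much larger than an "active" count.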

Microsoft telemetry: regional and industry distribution of active agents (not an adoption percent).

- What the data says: EMEA 42%, United States 29%, Asia 19%, Americas excluding the United States 10% (regional distribution of active agents). Industry shares: software and technology 16%, manufacturing 13%, financial services 11%, retail 9% (share of all active agents)

- The scope: Active agents built with Copilot Studio or Agent Builder

- Timeframe: Last 28 days of November 2025

- Keep in mind: These are shares of observed active agents, not “percent of companies in that region using agents.” They can be influenced by Microsoft footprint and where those tools are sold and used

- How you can use this: Use it to spot where agent activity clusters inside the Microsoft ecosystem, then compare with your own footprint.
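The difference between a share of observed agents and an adoption rate is easy to see with a small worked example. The numbers below are invented for illustration and do not come from Microsoft or any other source; the region names and counts are hypothetical.

```python
# Hypothetical counts, for illustration only.
regions = {
    "Region A": {"active_agents": 4200, "companies_with_agents": 300, "companies_total": 1000},
    "Region B": {"active_agents": 1000, "companies_with_agents": 400, "companies_total": 500},
}

total_agents = sum(r["active_agents"] for r in regions.values())

summary = {}
for name, r in regions.items():
    summary[name] = {
        # Share of all observed active agents that sit in this region.
        "share_of_agents": r["active_agents"] / total_agents,
        # Share of companies in this region that run at least one agent.
        "adoption_rate": r["companies_with_agents"] / r["companies_total"],
    }

for name, s in summary.items():
    print(f"{name}: {s['share_of_agents']:.0%} of agents, {s['adoption_rate']:.0%} adoption")
```

In this made-up data, Region A holds most of the agents yet has the lower adoption rate, because a few heavy users can dominate the agent count. The regional figures above describe the first quantity, not the second.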

The big picture: broad surveys that explicitly mention agentic AI

McKinsey Global Survey: scaling vs experimenting with agentic AI.

- What the data says: 23% say their organizations are scaling an agentic AI system somewhere, and 39% say they are experimenting with one

- Who they asked: 1,993 survey participants across 105 nations (McKinsey Global Survey)

- Timeframe: June 25 to July 29, 2025

- Behind the numbers: McKinsey reports it weighted responses by the contribution of each respondent’s nation to global GDP

- Keep in mind: Self-reported. “Scaling” can mean different things by company. The survey measures the respondent’s view of their organization, not direct telemetry

- How you can use this: Use it to quantify the split between experimentation and scale in a broad, cross-industry sample.
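To see why GDP weighting matters when reading a headline percentage, here is a rough Python sketch of one common way to weight survey responses by a nation's share of GDP. McKinsey does not publish its exact procedure in the summary cited here, so treat this as an assumption about the general technique; the nations, GDP shares, and responses are all hypothetical.

```python
from collections import Counter

# Hypothetical responses and GDP shares, for illustration only.
responses = [
    {"nation": "A", "scaling": True},
    {"nation": "A", "scaling": False},
    {"nation": "A", "scaling": False},
    {"nation": "A", "scaling": False},
    {"nation": "B", "scaling": True},
    {"nation": "B", "scaling": True},
]
gdp_share = {"A": 0.75, "B": 0.25}


def weighted_share(responses, gdp_share, key):
    """Share answering True, with each nation's responses summing to its GDP share."""
    counts = Counter(r["nation"] for r in responses)
    total_weight = 0.0
    weighted_yes = 0.0
    for r in responses:
        # Split the nation's GDP share evenly across its respondents.
        weight = gdp_share[r["nation"]] / counts[r["nation"]]
        total_weight += weight
        if r[key]:
            weighted_yes += weight
    return weighted_yes / total_weight


unweighted = sum(r["scaling"] for r in responses) / len(responses)
weighted = weighted_share(responses, gdp_share, "scaling")
```

In this toy data the unweighted share is 50% and the weighted share is 43.75%, because the larger economy has the lower rate. A weighted headline number therefore describes the sample after adjustment, not a simple headcount of respondents.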

ServiceNow and Oxford Economics: “33% are piloting or have at least one functioning agentic AI use case.”

- What the data says: 33% are piloting first use cases or have at least one fully functioning agentic AI use case; 43% are considering adopting agentic AI in the next 12 months

- Who they asked: Just under 4,500 executives worldwide, across public and private sector organizations

- Behind the numbers: ServiceNow says Oxford Economics and ServiceNow surveyed the executives; the report frames “agentic AI” as autonomous systems that act in pursuit of defined goals

- Keep in mind: Self-reported. The “at least one functioning use case” threshold does not mean broad rollout

- How you can use this: Use it to estimate how many firms say they have moved beyond awareness into pilots or an initial working use case.

Deloitte: intent to deploy agents is high, governance maturity is lower.

- What the data says: 74% of respondents plan to deploy agentic AI within the next two years; 21% say they have a mature governance model for agentic AI

- Who they asked: 3,235 director-level to C-suite respondents across 24 countries and six industries

- Timeframe: August to September 2025

- Keep in mind: This is intent, not confirmed usage. The governance maturity measure is self-reported

- How you can use this: Use it to frame the likely near-term pipeline of agent rollouts, and to justify investment in controls, testing, and monitoring.

KPMG Global Tech Report 2026: investment commitment to agentic AI.

- What the data says: 88% say they are investing in building agentic AI into their systems

- Who they asked: Survey of 2,500 executives from 27 countries and territories, across eight industries

- Where: 29% Asia Pacific, 43% EMEA, 28% Americas (respondent mix)

- Keep in mind: Self-reported investment intent. “Investing” can range from budget planning to active build programs

- How you can use this: Use it to support the claim that agentic AI is moving into budget lines across many regions and industries.

Evidence bucket: developer and build-team signals

LangChain: production agents reported by builders (2026 survey wave).

- What the data says: 57.3% of respondents say they have agents running in production environments; 30.4% say they are actively developing agents with concrete plans to deploy them

- Who they asked: 1,340 survey responses gathered over two weeks from Nov 18 to Dec 2, 2025, spanning engineers, product managers, business leaders, and executives

- Keep in mind: Builder-heavy sample. The report’s demographic section shows a large share of respondents work in technology, so treat this as a “teams building agents” signal, not a census of all companies

- How you can use this: Use it to benchmark builder-side progress and the split between production and near-term deployment.

LangChain: company size split for agents in production (builder survey).

- What the data says: Among 10,000+ employee organizations, 67% had agents in production; among organizations with fewer than 100 people, 50% had agents in production

- Keep in mind: Same builder-heavy sample

- How you can use this: Use it as a directional signal for how production agent work differs by organization size.

LangChain: leading agent use cases (share of reported main deployments).

- What the data says: Customer service 26.5%; research and data analysis 24.4%

- Behind the numbers: Respondents selected one main use case

- Keep in mind: Not the share of all companies. It is the distribution of what respondents picked as the main deployment category

- How you can use this: Use it to shortlist pilot areas with a track record among teams building agents today.

Insights from vendor surveys

CrewAI survey: Fortune 500 executive self-reports about current use and 2026 spend plans.

- What the data says: 65% of Fortune 500 executives report using AI agents in 2025; 79% plan to increase AI agent investments in 2026; 66% have expanded agent use beyond IT to other departments

- Who they asked: 500 C-level executives at organizations with $100M+ revenue and 5,000+ employees, across seven global regions; the survey was fielded in June 2025 (per the release)

- Keep in mind: Vendor-sponsored survey. Respondents are senior leaders, not necessarily hands-on owners of production systems

- How you can use this: Use it as a signal of executive awareness and spend intent, not as a measured count of deployed production agents.

How adoption varies by company size, region, and industry

Finding clear, apples-to-apples comparisons across different demographics is still tricky. While many reports group companies by revenue or headcount, they rarely offer a clean, comparable percentage of who actually has agents running in production.

Here is what the current data tells us:

Company size

- The Salesforce Connectivity Benchmark Report (late 2025), which surveyed IT leaders at organizations with over 1,000 employees, suggests that large enterprises are focused on getting their architecture ready for agents, even though population-wide deployment numbers are not yet available.

- LangChain’s late-2025 survey gives us one of the clearest splits. They found that 67% of large organizations (10,000+ employees) report having agents in production, compared to 50% of smaller teams (under 100 people). That 17-point gap is a directional signal that enterprise teams are ahead in deployment, at least within this builder-heavy sample.

Region

Broad, cross-platform adoption rates by region aren't publicly available yet, as most surveys stick to global totals without standardizing their definitions. However, Microsoft’s November 2025 telemetry gives us a great look at where active agents are clustering within their specific ecosystem:

- EMEA leads with 42% of active agents.

- The United States accounts for 29%.

- Asia sits at 19%.

- The Americas (excluding the US) make up 10%.

Industry

We still lack a universal, cross-platform metric for production agents by industry. Most of the data we have right now is either self-reported or tied to specific platforms. Here's what the major reports show:

- According to Microsoft’s telemetry, the highest share of active agents can be found in software and technology (16%), manufacturing (13%), financial services (11%), and retail (9%).

- KPMG’s survey of 2,500 executives across eight major industries found that an overwhelming 88% are investing in building agentic AI, showing that budget allocation is happening across the board, from healthcare to industrial manufacturing.

- McKinsey notes that while tech, media, telecom, and healthcare report strong agentic AI adoption, no single business function has exceeded 10% when it comes to actually scaling these systems.

From pilots to production

Most leaders do not need a single “agent adoption percent.” They need to know how fast teams move from pilots to production, and what tends to stop them.

Here is what the best public signals suggest.

Pilot and production markers are not the same across sources

- McKinsey: distinguishes “experimenting” vs “scaling” an agentic AI system.

- ServiceNow: reports “piloting” and “at least one fully functioning use case.”

- LangChain: reports “agents in production” from builders.

- Microsoft telemetry: defines “active agents” as deployed to production with real activity in the last 28 days, within its platform.

Try to avoid mixing these into one blended number, as they all measure slightly different thresholds.
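One way to keep these thresholds separate in a spreadsheet or internal dashboard is to store each figure together with its population, threshold, and method, and to refuse to average figures that do not match. The Python sketch below uses the percentages quoted in this article; the `Stat` class and `blend` function are hypothetical helpers, and Microsoft's "over 80%" is stored as 80.0 as a lower bound.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Stat:
    source: str
    value_pct: float
    population: str
    threshold: str  # what must be true for a respondent or company to be counted
    method: str     # "survey" or "telemetry"


STATS = [
    Stat("McKinsey", 23.0, "global survey respondents", "scaling", "survey"),
    Stat("ServiceNow", 33.0, "executives",
         "piloting or at least one functioning use case", "survey"),
    Stat("LangChain", 57.3, "builder survey respondents",
         "agents in production", "survey"),
    Stat("Microsoft", 80.0, "Fortune 500",
         "active agents (activity in past 28 days)", "telemetry"),
]


def blend(stats):
    """Average values only when population, threshold, and method all match."""
    keys = {(s.population, s.threshold, s.method) for s in stats}
    if len(keys) > 1:
        raise ValueError(
            "refusing to blend: stats measure different populations or thresholds"
        )
    return mean(s.value_pct for s in stats)


try:
    blend(STATS)
    blended_ok = True
except ValueError:
    blended_ok = False  # expected: the four sources are not comparable
```

The guard is deliberately strict. It is better for a report to show four labeled numbers than one average that no source actually measured.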

The gap between experiments and production

Deloitte: experiments reach production slowly in many organizations.

- What the data says: 25% of organizations have moved 40% or more of their AI experiments into production

- The scope: Deloitte State of AI in the Enterprise sample (3,235 leaders across 24 countries)

- Timeframe: Fielded August to September 2025 (published October 2025)

- How you can use this: This is a great benchmark for your own team. If your internal conversion from experiment to deployment feels slow, this data shows that most organizations are facing the exact same hurdle.

LangChain: barriers to production from a builder survey.

- What the data says: 32% cite quality as their main blocker; 20% cite response time as their main blocker

- Good to know: Among organizations with 2,000+ employees in the same survey, security is cited by 24.9% of respondents as a top concern

- How you can use this: This highlights exactly where to focus early engineering efforts. Prioritizing evaluation, latency control, and bringing security teams in from day one (especially in enterprise builds) can help you avoid these common roadblocks

What blocks production

These are the blockers backed by at least one source we used for this analysis:

- Controls lag behind intent. Deloitte reports only 21% say they have a mature governance model for agentic AI, while Microsoft reports 47% of organizations say they apply specific security controls for generative AI.

- Shadow usage grows. Microsoft reports 29% of employees have turned to unsanctioned AI agents for work tasks. This can create risk even when official rollouts move slowly.

- Systems are hard to connect. Salesforce’s Connectivity Benchmark Report survey of 1,050 enterprise IT leaders reports 96% agree AI agent success depends on connecting data across systems, and 94% agree it will require more API-based architecture.

How you can use this: This list of blockers is a starting point for production readiness planning. Teams that have not addressed governance, unsanctioned usage, and system integration before rollout should expect delays.

Tooling and platform signals

Tooling signals answer a different question than “How many companies use agents?” Instead, they answer, “Are teams building agents, and is usage rising inside platforms?”

Think of these numbers as leading indicators of where the market is heading.

Platform build signals

Microsoft 365: over 1 million custom agents created in a quarter (2025 baseline).

- What the data says: Customers created more than 1 million custom agents across SharePoint and Copilot Studio in the prior quarter, up 130% quarter over quarter

- Timeframe: Published May 19, 2025

- How you can use this: This serves as a historical baseline showing how quickly agent building grew inside a major ecosystem. Just be careful to pair it with newer data on "active agents" so you aren't confusing agents that were merely "created" with those actually being "used."

Salesforce: Agentforce token volume.

- What the data says: Agentforce surpassed 3.2 trillion tokens (reported in Salesforce’s fiscal 2026 Q3 results press release)

- Keep in mind: Tokens are not “agents,” and one customer can create large token volumes. Treat this as a usage intensity signal inside one vendor stack

- How you can use this: While tokens don't equal agents, this number indicates that agent-like features are generating large volumes of model calls in production environments.

Engineering practice signals (builder survey)

These numbers do not measure adoption directly. They show what teams report they put in place when they run agents in production.

LangChain: observability and tracing for agents.

- What the data says: 89% report some form of observability for their agents, while 62% report detailed tracing that lets teams inspect individual agent steps and tool calls

- Good to know: Among respondents who already have agents in production, 94% report some observability and 71.5% report full tracing capabilities

- How you can use this: If you need to convince stakeholders that telemetry, tracing, and incident workflows belong in the plan for a production rollout (and are not optional "nice-to-haves"), these figures show that most teams running agents in production already have them.
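For readers who have not seen step-level tracing, here is a minimal Python sketch of the idea: every agent step or tool call records its name, status, and duration. It is an illustration only and does not use LangChain or any vendor's tracing API; the `traced` decorator, the `TRACE` list, and the order-lookup functions are hypothetical.

```python
import time
from functools import wraps

TRACE: list[dict] = []  # in a real system this would go to a tracing backend


def traced(step_name):
    """Decorator that records status and duration for each call of a step."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "ok"
            try:
                return fn(*args, **kwargs)
            except Exception:
                status = "error"
                raise
            finally:
                TRACE.append({
                    "step": step_name,
                    "status": status,
                    "duration_ms": (time.perf_counter() - start) * 1000,
                })
        return wrapper
    return decorator


@traced("lookup_order")
def lookup_order(order_id: int) -> dict:
    # Stand-in for a real tool call such as a database or API lookup.
    return {"order_id": order_id, "status": "shipped"}


@traced("draft_reply")
def draft_reply(order: dict) -> str:
    # Stand-in for a model call that writes the customer reply.
    return f"Order {order['order_id']} is {order['status']}."


reply = draft_reply(lookup_order(42))
```

After the run, `TRACE` holds one entry per step in execution order, which is the raw material for latency dashboards and incident reviews.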

LangChain: evaluation and testing practices.

- What the data says: 52.4% report running offline evaluations on test sets; 37.3% report running online evaluations

- Good to know: Among respondents with agents in production, “not evaluating” drops from 29.5% to 22.8%, and online evaluations rise to 44.8%

- How you can use this: You can use these numbers to set a baseline for your own team's testing protocols. Proper evaluation is quickly becoming the industry standard, especially before rolling out any customer-facing use cases.
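An offline evaluation can be as simple as running the agent against a fixed test set and blocking release when the pass rate falls below a chosen bar. The Python sketch below shows that pattern under assumptions: `toy_agent`, the test cases, and the 90% gate are hypothetical, and exact string matching is the crudest possible scoring rule.

```python
def toy_agent(question: str) -> str:
    """Stand-in for a real agent; answers from a tiny lookup table."""
    answers = {"refund window?": "30 days", "support hours?": "9-5"}
    return answers.get(question, "unknown")


# Hypothetical test set with known expected answers.
TEST_SET = [
    {"input": "refund window?", "expected": "30 days"},
    {"input": "support hours?", "expected": "9-5"},
    {"input": "shipping cost?", "expected": "free over $50"},
]


def run_offline_eval(agent, test_set, min_pass_rate):
    """Run every case, collect failures, and check the pass rate against a gate."""
    failures = [case for case in test_set if agent(case["input"]) != case["expected"]]
    pass_rate = 1 - len(failures) / len(test_set)
    return {
        "pass_rate": pass_rate,
        "failures": failures,
        "passed_gate": pass_rate >= min_pass_rate,
    }


report = run_offline_eval(toy_agent, TEST_SET, min_pass_rate=0.9)
```

Here the agent answers two of three cases correctly, so it fails the gate and the failing case is listed for review. Online evaluation applies the same idea to sampled live traffic instead of a fixed test set.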

What these numbers do and do not mean

When navigating the hype around AI, it's easy for these statistics to get misinterpreted. Here is where we see the most common mix-ups.

“AI usage” is not the same as “agent usage”

Many surveys report on “AI in at least one function” or general “gen AI usage.” That can include simple chatbots, content drafting tools, or basic analytics features. An actual agent requires tool execution and multi-step task completion.

When looking at a statistic, it always helps to check if the source:

- Explicitly says “agentic AI” or “AI agents”

- Defines what they mean by the term

- Measures actual tool use, not just chat capabilities

“Pilot” and “Production” mean different things to different people

A pilot can mean:

- A demo project run by one team

- A limited rollout to a small user group

- A real production workflow with limited scope

Production can mean:

- Live, monitored, used weekly

- Deployed, yet unused after launch

- Live with guardrails, audits, and incident response

For example, Microsoft’s telemetry-based definition of an “active agent” is much stricter than what a respondent might mean in a survey. It's best to treat these as different thresholds rather than comparing them directly.

Understanding the data: Surveys vs. Telemetry

When reading these reports, it helps to remember that every data source has its own blind spots.

- Surveys show intent, but come with bias. Self-reported surveys are fantastic for gauging market direction and executive pipelines. However, they rely heavily on how respondents interpret the questions. Plus, developer surveys naturally attract teams already excited about building agents, while vendor-sponsored surveys often reflect the optimism of their existing customers.

- Telemetry shows reality, but only in one bubble. Measured platform data is incredibly precise because it tracks actual usage, not just opinions. The catch? It only tells you what's happening inside that specific vendor's ecosystem, not the broader market.

The clearest picture emerges when you combine both. Let the broad surveys show you where the market is heading, and use the hard telemetry to verify what is actually being built.

The two stats to take to your next meeting

- For a broad, cross-industry view: Point to McKinsey’s mid-2025 survey, where 23% of organizations reported scaling an agentic AI system, and 39% were experimenting.

- For a large-enterprise, platform-measured view: Look at Microsoft’s November 2025 telemetry, showing that over 80% of the Fortune 500 are deploying active agents in their ecosystem.

How to frame these stats in your own presentations

It’s easy for these numbers to get twisted in pitch decks or board meetings. If you are presenting this data to your team or stakeholders, here are a few accurate ways to frame the market, and a few common traps to avoid.

Solid, accurate ways to phrase it:

- "According to a mid-2025 McKinsey survey, about 23% of respondents said their organizations are scaling agentic AI, while another 39% are still experimenting."

- "Microsoft's recent telemetry shows that over 80% of Fortune 500 companies have active agents deployed in production, specifically using Copilot Studio or Agent Builder."

- "A recent study by ServiceNow and Oxford Economics found that a third of executives are either piloting agentic AI or have at least one working use case, with another 43% eyeing it for the coming year."

Try to steer clear of vague claims like:

- “Most companies use AI agents” (it sounds catchy, but it's too broad and doesn't define the population or what an 'agent' actually is)

- “AI agents are everywhere” (lacks any real metric or context to back it up)

- “Agent adoption is 80%” (this strips away all the important context, like the fact that it only applies to Fortune 500 companies within a specific Microsoft ecosystem)

Partner with Lexogrine

Lexogrine is an AI agent development company that helps teams build custom AI agents that fit real workflows, permissions, and data access rules.

If you want an agent that does more than chat, we can build systems that:

- Call tools and APIs to complete multi-step tasks

- Work with internal knowledge bases and structured data

- Support approvals, logging, and audit trails

- Connect with CRM flows, ticketing workflows, contact center operations, and internal service desks

We deliver full-stack builds with React, React Native, Node.js, and cloud delivery on AWS or GCP.

Sources we used for this article

- 80% of Fortune 500 use active AI Agents: Observability, governance, and security shape the new frontier, Microsoft Security, February 10, 2026, https://www.microsoft.com/en-us/security/blog/2026/02/10/80-of-fortune-500-use-active-ai-agents-observability-governance-and-security-shape-the-new-frontier/

- Cyber Pulse: An AI Security Report, Microsoft Security, February 10, 2026, https://www.microsoft.com/en-us/security/blog/2026/02/10/cyber-pulse-an-ai-security-report/

- The state of AI in early 2025: Takeaways from our latest Global Survey, McKinsey & Company, March 12, 2025, https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-in-early-2025-takeaways-from-our-latest-global-survey

- The State of AI in the Enterprise, Deloitte, October 2025, https://www.deloitte.com/global/en/issues/generative-ai/state-of-ai-in-enterprise.html

- Enterprise AI Maturity Index 2025, ServiceNow, 2025, https://www.servicenow.com/workflows/ai/ai-maturity-index.html

- KPMG's Global Tech Report 2026, KPMG, January 2026, https://kpmg.com/za/en/newsroom/press-releases/2026/01/global-tech-report-2026.html

- State of Agent Engineering, LangChain, 2026, https://www.langchain.com/state-of-agent-engineering

- CrewAI Survey Reveals 65% of Fortune 500 Executives Report Using AI Agents in 2025, Business Wire, November 12, 2025, https://www.businesswire.com/news/home/20251112321073/en/CrewAI-Survey-Reveals-65-of-Fortune-500-Executives-Report-Using-AI-Agents-in-2025

- Salesforce Announces 2026 Connectivity Report, Salesforce News, February 5, 2026, https://www.salesforce.com/news/stories/connectivity-report-announcement-2026/

- Introducing Microsoft 365 Copilot Tuning, multi-agent orchestration, and more from Microsoft Build 2025, Microsoft 365 Blog, May 19, 2025, https://www.microsoft.com/en-us/microsoft-365/blog/2025/05/19/introducing-microsoft-365-copilot-tuning-multi-agent-orchestration-and-more-from-microsoft-build-2025/

- Salesforce Third Quarter Fiscal 2026 Results Press Release, Salesforce Investor Relations, December 3, 2025, https://investor.salesforce.com/press-releases/press-release-details/2025/Salesforce-Delivers-Record-Third-Quarter-Fiscal-2026-Results-Driven-by-Agentforce--Data-Cloud/default.aspx

- Gartner Predicts 40% of Enterprise Applications Will Feature Task-Specific AI Agents by 2026, Gartner, August 26, 2025, https://www.gartner.com/en/newsroom/press-releases/2025-08-26-gartner-predicts-40-percent-of-enterprise-applications-will-feature-task-specific-ai-agents-by-2026